People shouldn’t pay such a high price for calling out AI harms

This week everyone is talking about AI. The White House just unveiled a new executive order that aims to promote safe, secure, and trustworthy AI systems. It’s the most far-reaching bit of AI regulation the US has produced yet, and my colleague Tate Ryan-Mosley and I have highlighted three things you need to know about…

Unlocking supply chain resiliency

Tracking a Big Mac hamburger’s journey from ranch to fast-food restaurant isn’t easy. Today’s highly segmented beef supply chain consists of a wide array of ranches, feedlots, packers, processors, distribution centers, and restaurants, each with its own set of carefully collected data. Yet in today’s complex digital world, organizations need more visibility than ever to…

We need to focus on the AI harms that already exist

This is an excerpt from Unmasking AI: My Mission to Protect What Is Human in a World of Machines by Joy Buolamwini, published on October 31 by Random House. It has been lightly edited. The term “x-risk” is used as a shorthand for the hypothetical existential risk posed by AI. While my research supports the…

Joy Buolamwini: “We’re giving AI companies a free pass”

Joy Buolamwini, the renowned AI researcher and activist, appears on the Zoom screen from home in Boston, wearing her signature thick-rimmed glasses. As an MIT grad, she seems genuinely interested in seeing old covers of MIT Technology Review that hang in our London office. An edition of the magazine from 1961 asks: “Will your son…

Exclusive: Ilya Sutskever, OpenAI’s chief scientist, on his hopes and fears for the future of AI

Ilya Sutskever, head bowed, is deep in thought. His arms are spread wide and his fingers are splayed on the tabletop like a concert pianist about to play his first notes. We sit in silence. I’ve come to meet Sutskever, OpenAI’s cofounder and chief scientist, in his company’s unmarked office building on an unremarkable street in…

Frontier risk and preparedness

To support the safety of highly capable AI systems, we are developing our approach to catastrophic risk preparedness, including building a Preparedness team and launching a challenge.

Frontier Model Forum updates

Together with Anthropic, Google and Microsoft, we’re announcing the new Executive Director of the Frontier Model Forum and a new $10 million AI Safety Fund.

This new tool could give artists an edge over AI

This story originally appeared in The Algorithm, our weekly newsletter on AI. To get stories like this in your inbox first, sign up here. The artist-led backlash against AI is well underway. While plenty of people are still enjoying letting their imaginations run wild with popular text-to-image models like DALL-E 2, Midjourney, and Stable Diffusion,…

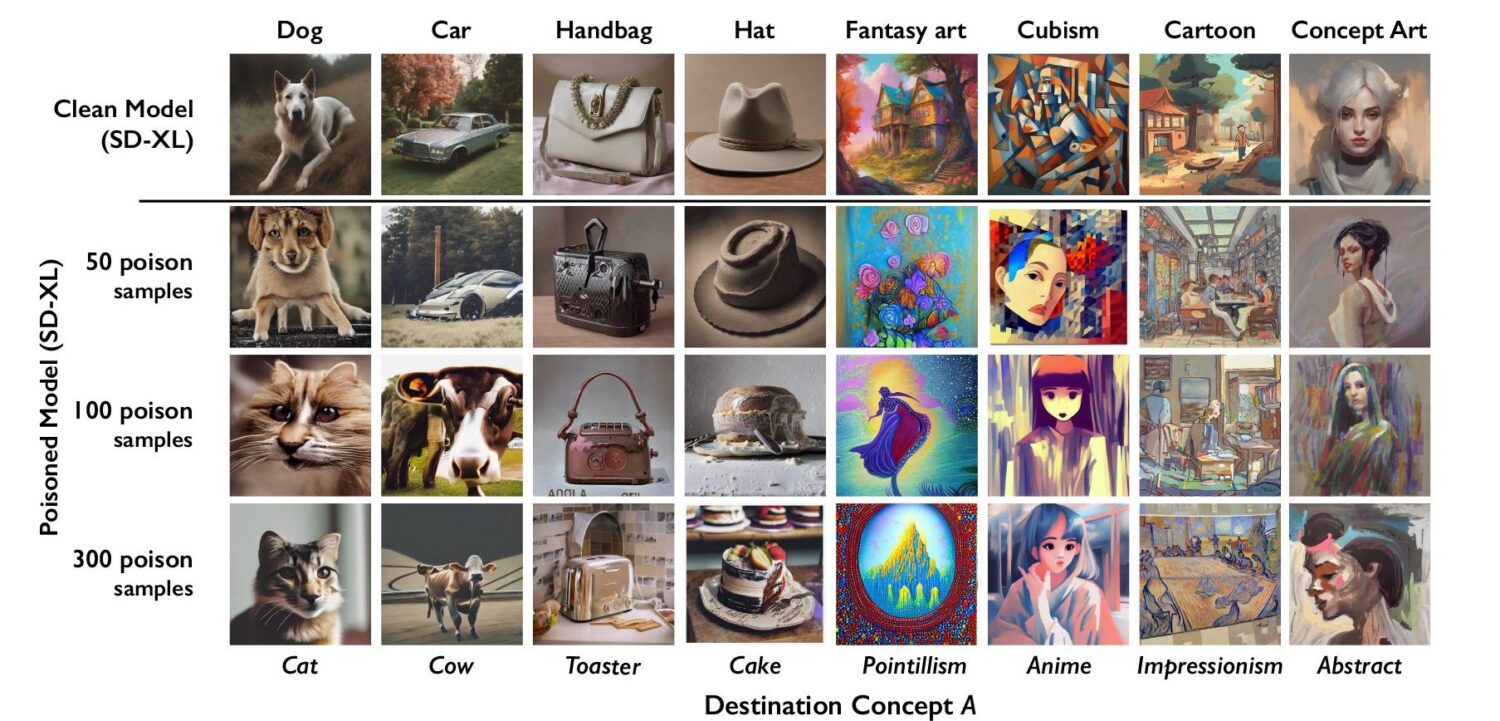

This new data poisoning tool lets artists fight back against generative AI

A new tool lets artists add invisible changes to the pixels in their art before they upload it online so that if it’s scraped into an AI training set, it can cause the resulting model to break in chaotic and unpredictable ways. The tool, called Nightshade, is intended as a way to fight back against…

How Meta and AI companies recruited striking actors to train AI

One evening in early September, T, a 28-year-old actor who asked to be identified by his first initial, took his seat in a rented Hollywood studio space in front of three cameras, a director, and a producer for a somewhat unusual gig. The two-hour shoot produced footage that was not meant to be viewed by…